This post was last updated 6 years 10 months 16 days ago, some of the information contained here may no longer be actual and any referenced software versions may have been updated!

I have been working with PHP for about 6 years now, and my first public-facing development server is about the same age. It started life as a 32-bit 1GB Linode, probably running Ubuntu 10.x. It survived a couple of OS upgrades and even a pseudo kernel shift to 64-bit, and ended life as a Linode 4096 running Ubuntu 14.x.

Maintaining servers, whether physical or virtual, is a real pain in the neck. As time goes by you develop apps, you install more and more software, and your simple PHP development platform starts to become quite complex. When it comes to a major OS update, e.g. Ubuntu 14.04 LTS to 16.04 LTS, where the MySQL and PHP versions change, keeping all your (now live) websites, blogs and apps running can become a real challenge.

In an ideal world you would have multiple servers designed specifically for the apps they are running. In the real world, when we are talking about personal websites, blogs and forums, everything ends up running on the same server. The longer you postpone an operating system upgrade, the harder it gets. Some people live by the ‘if it’s running, don’t break it’ theory, but when developing apps you want to stay up to date with best practice and be aware of what is being deprecated or is no longer supported. Again, the longer you ignore updates to PHP, the harder it will be to update your PHP apps!

It’s a bit of a catch-22. You need new server hardware or a new operating system, but you can’t just upgrade in one go because too many apps will be affected, resulting in a lot of downtime while you fix all the broken code. Nor can you migrate to a new server, because you know some of your apps won’t run there.

As soon as you build a new server the process starts again and the next upgrade becomes just as challenging.

What is the solution? Containerisation.

Containerisation with Docker

Docker containers wrap up a piece of software in a complete filesystem that contains everything it needs to run: code, runtime, system tools, system libraries – anything you can install on a server. This guarantees that it will always run the same, regardless of the environment it is running in.

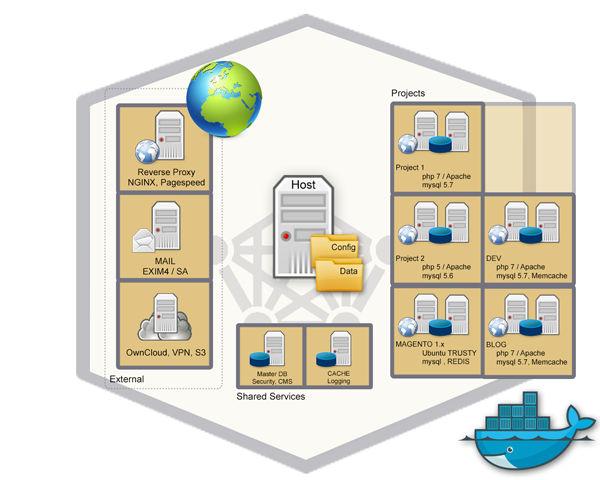

I moved each of my apps and server services into a container customised for the requirements of that app, be it a specific PHP or MySQL version or operating system. In this way I containerised (virtualised) all my server applications, including PHP, mail, cloud storage, VPN, web apps, blogs, forums and Magento, into new zero-administration servers that I will never have to worry about upgrading!

All the old apps you know are not compatible with the latest version of PHP (e.g. Magento 1.x) can keep running in a container customised for their requirements. The Docker host server can now run a very minimal operating system, ideally with just Docker installed, which makes future upgrades fairly uncomplicated.
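As a minimal sketch of what "a container customised for their requirements" can look like, the Compose fragment below runs an older app and a newer one side by side on the same host, each on its own PHP runtime. The service names, folder paths, host ports and the official `php:*-apache` image tags are illustrative assumptions, not the configuration described in this post.

```yaml
# Hypothetical example: two PHP apps with different runtime needs
# sharing one minimal Docker host. Names, paths and ports are assumptions.
services:
  legacy-shop:
    # older app pinned to an older PHP runtime
    image: php:5.6-apache
    volumes:
      - ./legacy-shop/html:/var/www/html
    ports:
      - "8081:80"
    restart: always

  new-app:
    # current app on a newer PHP runtime
    image: php:8.2-apache
    volumes:
      - ./new-app/html:/var/www/html
    ports:
      - "8082:80"
    restart: always
```

In practice an app such as Magento 1.x would usually need a custom image built `FROM` a base like this with extra PHP extensions installed, but the principle is the same: the host only needs Docker, and each app carries its own runtime.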

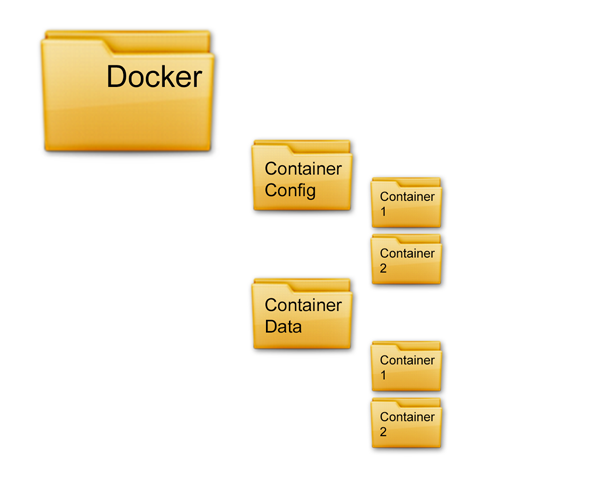

Because all your apps are in containers, moving to a new server is as simple as copying the container configuration and data files to the new server and starting the containers. You no longer have to worry about upgrade compatibility issues, as each application runs in a container image that in theory will never change!

The golden rule is to always treat your containers as volatile storage: never store live data in them, and never commit configuration changes to a container that are not also captured in your configuration files.

Separating your application data into persistent Docker volumes located in logically named folders also simplifies basic housekeeping tasks like backups and log management. With all app data located in data folders, your container (application) data can be easily backed up. Log files are also easy to manage because they are consolidated into Docker’s central logging engine, so all your log data can be centrally stored and analysed.
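A minimal sketch of the data-folder and logging ideas, assuming a MySQL-backed app: the live database files are bind-mounted from a `./data/...` folder on the host rather than kept inside the container, and Docker's default `json-file` logging driver is told to rotate its files. The service name, folder layout, image tag, placeholder password and rotation sizes are all hypothetical.

```yaml
# Hypothetical blog database service; names and values are assumptions.
services:
  blog-db:
    image: mysql:5.7
    restart: always
    environment:
      MYSQL_ROOT_PASSWORD: change-me   # placeholder only, use a secret in practice
    volumes:
      # live data lives in a logically named host folder, not in the container
      - ./data/blog-db:/var/lib/mysql
    logging:
      # default json-file driver, rotated so logs cannot fill the disk
      driver: json-file
      options:
        max-size: "10m"
        max-file: "3"
```

With all live data under `./data`, a backup can be as simple as archiving that folder (ideally with the containers stopped so the database files are consistent), and `docker compose logs` shows the collected output of every service defined in the file.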

In practice Docker just works: containers start and stop in seconds, and in some cases your apps will run much faster. There is a lot of work in configuring each container to replicate the environment you want, but once your Dockerfile is written it becomes a good template for future apps.

It’s easy to get carried away containerising everything, so you have to monitor the host server resources you are using. I ended up with over 40 running containers, which maxed out the RAM in a 4GB Linode and caused containers to crash when they suddenly ran out of memory. I upgraded to an 8GB Linode, where RAM usage now averages just over 4GB for 40 running containers, leaving me room for expansion.
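One way to stop a single container from exhausting the host's RAM is a per-service memory cap. This sketch assumes the current Compose Specification (Docker Compose v2), where `mem_limit` is a service-level attribute; the service name, image and the 256m value are illustrative only.

```yaml
# Hypothetical service with a hard memory cap; values are assumptions.
services:
  forum:
    image: php:8.2-apache
    restart: always
    # if processes in this container exceed the cap, the kernel's OOM killer
    # acts inside this container's cgroup instead of starving the whole host
    mem_limit: 256m
```

`docker stats` then shows each container's memory usage against its limit, which makes it easier to spot a container that is heading for trouble before the host runs out of RAM.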

I recommend building your containers using Docker Compose. Docker Compose lets you keep your container configuration in one folder and provides an easy command-line interface to start, stop and build containers. Here, for example, is the Docker Compose file for my Exim4 mail server container.

```yaml
# EXIM4 + SPAMASSASSIN
#
# Mail relay with configurable relay virtual domains and smtp auth
#
version: '3'

services:

  exim4:
    build: ./exim4/
    hostname: host
    domainname: mail.com
    # mail server from these networks :
    networks:
      - server
    # SMTP published on the host
    ports:
      - "25:25"
    # SMTPS and submission, reachable only by containers on the same network
    expose:
      - 465
      - 587
    volumes:
      - ./virtual:/etc/exim4/virtual
    restart: always
    # hourly check that the SMTP server answers on port 25
    healthcheck:
      test: ["CMD-SHELL", "echo 'QUIT' | nc -w 5 mail.server.co.uk 25 > /dev/null 2>&1 && echo 'SMTP OK'"]
      interval: 1h
      timeout: 10s
      retries: 3

  sa:
    build: ./sa/
    hostname: sa
    domainname: server.co.uk
    depends_on:
      - exim4
    networks:
      - server
    # spamd port, used by exim4 over the internal network
    expose:
      - 783
    restart: always

# define networks
networks:
  server:
```

Here you can see that the Docker Compose file creates two services, Exim4 and SpamAssassin. The file also configures health checks and networking. More about building containers such as this Exim4 mail server with SpamAssassin will follow in another post.
